Introducing Hanzi - A Character Decomposition Tool

I’ve been a bit busy lately, doing lots of coding. One of my personal projects this weekend (which will eventually be used in my research) was to create a Chinese character decomposition tool. I’ve always had trouble working out how to decompose Chinese characters into their radical components, so I set out to solve the problem. Say 你好 to Hanzi.

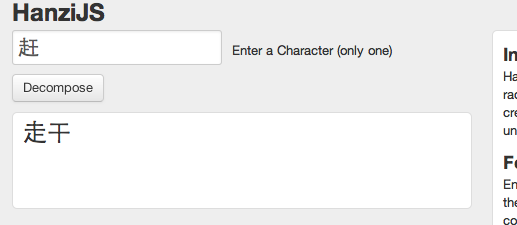

Example of decomposing the character 赶

To avoid being overly technical:

Hanzi is a Chinese character dictionary lookup (still in development) and radical decomposition module for Node.js.

It is written in JavaScript and runs on Node.js. It is aimed at both coders and language learners, as I think it could benefit many people out there. It is still very early in its development cycle, so it has a few limitations:

1) Can only look up one character at a time.

2) Only breaks down the character into two parts.

3) Some characters break down into REALLY weird glyphs, which can’t be shown by the average browser.

4) Some character decompositions show numbers instead of their components. This is how the data is structured. It will be solved soon. This is especially apparent in more complex characters and 繁体字 (traditional characters).

5) The decompositions aren’t all radicals. They are “parts” rather than radicals, at least for the most part. I will set out to show all the radicals eventually.

6) When decomposing a radical, it displays an “undefined” next to it. I will remove this in the next update.
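For the coders, here is a rough sketch of what a one-level, two-part decomposition lookup can look like. This is not Hanzi's actual code or API: the `DECOMPOSITIONS` table and the `decomposeOnce` function are hypothetical names made up for illustration, and the three entries are hand-typed rather than taken from the module's data. It mirrors limitations 1 and 2 above, and shows one possible way to avoid printing “undefined” for a character that has no entry.

```javascript
// Hypothetical illustration data: each character maps to exactly two parts.
// This is NOT the data file Hanzi uses.
const DECOMPOSITIONS = new Map([
  ['赶', ['走', '干']],
  ['好', ['女', '子']],
  ['你', ['亻', '尔']],
]);

// Decompose a single character one level deep (hypothetical helper).
function decomposeOnce(character) {
  // Spread the string so we count code points, not UTF-16 units;
  // rare glyphs outside the basic plane still count as one character.
  if ([...character].length !== 1) {
    throw new Error('Only one character can be looked up at a time');
  }
  const parts = DECOMPOSITIONS.get(character);
  // No entry (for example, the character is already a radical):
  // return the character itself instead of leaking `undefined`.
  return { character, parts: parts || [character] };
}

console.log(decomposeOnce('赶'));
console.log(decomposeOnce('走'));
```

A character with no entry simply comes back as its own single part, which is one way to handle the radical case without special output.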

But hey, it’s a start in the right direction. So try it out at HanziJS.com. I still have lots of development to do on this module, but it already works well enough to help you guys out. For a more technical post, head to my personal blog.